After playing around with a cheap USB MIDI breath-control pressure sensor, I got to thinking about adding some vocalization detection, perhaps kazoo-like humming or a throat whistle. Any reliable vocalization recognition could be used to add another control output.

I ran across one technique, YIN, which is said to be very tolerant of noise, like a breathy mic. This detector is used a lot in speech processing but also for singers. (There appeared to be some copyright issues.)

I later found pYIN (Probabilistic YIN), which is supposed to be an improvement, and the university that developed it only requires a mention in your projects. I found C++ source as well as a Python archive.

Their fundamental frequency detection demo

Looking to install it on my desktop, I discovered I already had it in Sonic Visualiser.

Aside from the blue-green voice waveform, there is the blue continuous frequency trace, and notes are indicated by red boxes. (It’s a little hard to distinguish the voice humming into a hose from the detector’s superimposed instrument sound.)

Another kazoo note-detection test, using a “how to kazoo like a sax” tutorial as a stand-in for vocalization into a breath controller.

While YIN was, I believe, capable of real-time detection, pYIN is only used as an analysis plugin; apparently a two-pass analysis is involved. My 10-year-old low-end desktop detects the notes in a 45 min clip in 8 seconds. Perhaps a reworked version of pYIN doing small-segment analysis could operate in near real time on a little Arm processor.
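To get a feel for what small-segment analysis might look like, here is a minimal sketch of the plain YIN steps (not pYIN, and not the plugin's code) applied to a single short frame in pure Python. The function name `yin_pitch`, the 0.15 threshold, and the 80 to 1000 Hz search range are my own assumptions for illustration.

```python
import math

def yin_pitch(frame, sample_rate, fmin=80.0, fmax=1000.0, threshold=0.15):
    """Estimate the fundamental (Hz) of one frame, or None if no clear pitch."""
    tau_min = int(sample_rate / fmax)
    tau_max = int(sample_rate / fmin)
    window = len(frame) - tau_max  # samples compared at each lag
    if window <= 0:
        raise ValueError("frame too short for the requested fmin")

    # Step 1: difference function d(tau) = sum_j (x[j] - x[j + tau])^2
    diff = [0.0] * (tau_max + 1)
    for tau in range(1, tau_max + 1):
        diff[tau] = sum((frame[j] - frame[j + tau]) ** 2 for j in range(window))

    # Step 2: cumulative mean normalised difference
    cmnd = [1.0] * (tau_max + 1)
    running = 0.0
    for tau in range(1, tau_max + 1):
        running += diff[tau]
        cmnd[tau] = diff[tau] * tau / running if running > 0 else 1.0

    # Step 3: first lag that dips below the threshold, then slide to its local minimum
    tau = tau_min
    while tau < tau_max:
        if cmnd[tau] < threshold:
            while tau + 1 < tau_max and cmnd[tau + 1] < cmnd[tau]:
                tau += 1
            break
        tau += 1
    else:
        return None  # nothing periodic enough in this frame

    # Step 4: parabolic interpolation around the minimum for sub-sample accuracy
    a, b, c = cmnd[tau - 1], cmnd[tau], cmnd[tau + 1]
    denom = a - 2 * b + c
    shift = 0.5 * (a - c) / denom if denom != 0 else 0.0
    return sample_rate / (tau + shift)

# Synthetic check: a 220 Hz sine sampled at 8 kHz, one 1024-sample frame
fs = 8000
hum = [math.sin(2 * math.pi * 220.0 * n / fs) for n in range(1024)]
estimate = yin_pitch(hum, fs)
silence = yin_pitch([0.0] * 1024, fs)
print(estimate, silence)
```

The inner sum makes this roughly `window * tau_max` operations per frame, so on a small processor one would probably lower the sample rate or narrow the pitch range before anything else.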

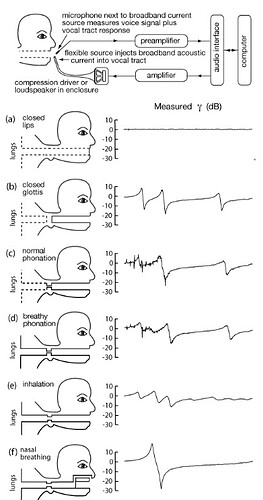

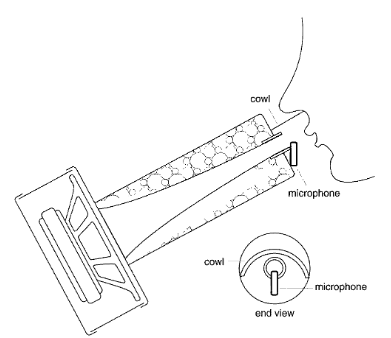

Another approach for adding telemetry to a wind controller may be detecting voice shaped wind noise.

I hoped the pink noise spectrum would reveal the dominant pitch of the wind sound effect, but the frequency variations go far beyond the sound one hears.

It’s interesting to note that someone wrote a machine-language 10-bin FFT for the original 8-bit Arduino AVR processor. It appears in a demo for the Adafruit Circuit Playground, an educational board with lots of gadgets, including a mic and big banana/alligator clip connection pads. The trouble with the FFT is its linear bin spacing, which doesn’t match up well with musical scales.
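The mismatch is easy to put numbers on. The sketch below converts FFT bin centres to fractional MIDI note numbers and prints how many semitones separate each bin from the next. The 8 kHz sample rate and 32-point FFT are made-up values for illustration, not the Circuit Playground demo's settings.

```python
import math

def hz_to_midi(freq_hz):
    """Fractional MIDI note number for a frequency (A4 = 440 Hz = note 69)."""
    return 69.0 + 12.0 * math.log2(freq_hz / 440.0)

def bin_gaps_in_semitones(sample_rate, fft_size):
    """For each bin k >= 1, return (centre_hz, semitones up to bin k + 1)."""
    spacing = sample_rate / fft_size  # constant spacing in Hz
    rows = []
    for k in range(1, fft_size // 2):
        lo = k * spacing
        hi = (k + 1) * spacing
        rows.append((lo, hz_to_midi(hi) - hz_to_midi(lo)))
    return rows

rows = bin_gaps_in_semitones(sample_rate=8000, fft_size=32)
for centre_hz, semitones in rows:
    print(f"{centre_hz:7.1f} Hz: next bin is {semitones:5.2f} semitones up")
```

The first two bins are a full octave apart while the top bins are barely more than a semitone apart, so the low notes that matter most for a hummed pitch get the worst resolution.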

. . . . . . . . . . . .

I first looked into vocalization research when considering the possibilities of making a better electrolarynx for those poor cancer patients who have lost their voice box. The simple buzzer gives a dehumanized, vocoder-like sci-fi Cylon sound. I thought that with a few buttons one could change pitch for a more natural intonation. Unfortunately, the electrolarynx is not generating a tone; rather, it’s palpating the throat. Perhaps some resonant harmonics in the throat could be mined. There is an alternative, “esophageal voice”, involving sticking a hose down one’s throat to pipe in speech synthesis.

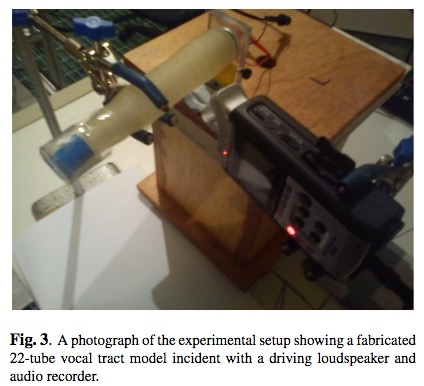

You can’t tell how deep a PhD given a bone wrapped in a grant will dig. There are countless publications in voice and music acoustics examining the human vocal tract, done by speech synthesizer designers and in singing studies.

A missing element from MIDI Wind Instruments

Studies of saxophone and didgeridoo players and sopranos show the importance of tuning one’s vocal tract for the purest instrument tones.

If the instrumentation technology used to study the vocal tract could be incorporated into a MIDI controller…

Sonar is probably out, but perhaps spectral analysis of white noise would expose the resonance of the throat.
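As a thought experiment only, the white-noise idea can be simulated: push random noise through a simple two-pole resonator standing in for one throat resonance, then probe a handful of frequencies with the Goertzel recurrence and see which one stands out. Everything here is assumed for illustration (the 700 Hz resonance, the 8 kHz sample rate, the function names); a real vocal tract has several resonances and a real mic adds its own colouring.

```python
import math
import random

def resonator(signal, sample_rate, centre_hz, r=0.98):
    """Two-pole resonator: a crude stand-in for a single vocal-tract resonance."""
    theta = 2 * math.pi * centre_hz / sample_rate
    a1, a2 = 2 * r * math.cos(theta), -r * r
    out, y1, y2 = [], 0.0, 0.0
    for x in signal:
        y = x + a1 * y1 + a2 * y2
        out.append(y)
        y1, y2 = y, y1
    return out

def goertzel_power(segment, sample_rate, freq_hz):
    """Power at one probe frequency in a segment, via the Goertzel recurrence."""
    coeff = 2 * math.cos(2 * math.pi * freq_hz / sample_rate)
    s1 = s2 = 0.0
    for x in segment:
        s1, s2 = x + coeff * s1 - s2, s1
    return s1 * s1 + s2 * s2 - coeff * s1 * s2

def averaged_spectrum(signal, sample_rate, freqs, seg_len=512):
    """Average the power over consecutive segments to smooth out noise variance."""
    starts = range(0, len(signal) - seg_len + 1, seg_len)
    segments = [signal[i:i + seg_len] for i in starts]
    return {f: sum(goertzel_power(s, sample_rate, f) for s in segments) / len(segments)
            for f in freqs}

rng = random.Random(1)  # fixed seed so the run is repeatable
fs = 8000
noise = [rng.gauss(0.0, 1.0) for _ in range(16 * 512)]
shaped = resonator(noise, fs, centre_hz=700.0)
spectrum = averaged_spectrum(shaped, fs, freqs=range(200, 2001, 100))
peak_hz = max(spectrum, key=spectrum.get)
print("strongest probe frequency:", peak_hz, "Hz")
```

Goertzel only computes the frequencies you ask for, so the probe points could be spaced along a musical scale instead of linearly, which sidesteps the FFT bin-spacing problem on a small processor.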

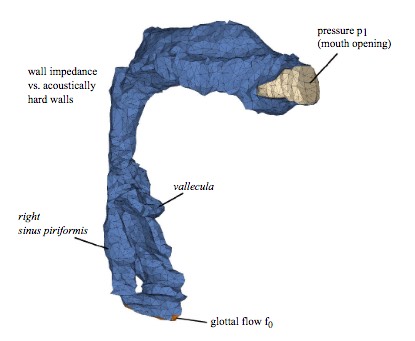

3D model (CT scan?) of vocal tract.